We explore large-scale training of generative models on video data. Specifically, we train text-conditional diffusion models jointly on videos and images of variable durations, resolutions and aspect ratios. We leverage a transformer architecture that operates on spacetime patches of video and image latent codes. Our largest model, Sora, is capable of generating a minute of high-fidelity video. Our results suggest that scaling video generation models is a promising path towards building general-purpose simulators of the physical world.

Video generation models as world simulators

This technical report focuses on (1) our method for turning visual data of all types into a unified representation that enables large-scale training of generative models, and (2) qualitative evaluation of Sora’s capabilities and limitations. Model and implementation details are not included in this report.

Much prior work has studied generative modeling of video data using a variety of methods, including recurrent networks, 1, 2, 3 generative adversarial networks, 4, 5, 6, 7 autoregressive transformers, 8, 9 and diffusion models. 10, 11, 12 These works often focus on a narrow category of visual data, on shorter videos, or on videos of a fixed size. Sora is a generalist model of visual data: it can generate videos and images spanning diverse durations, aspect ratios and resolutions, up to a full minute of high-definition video.

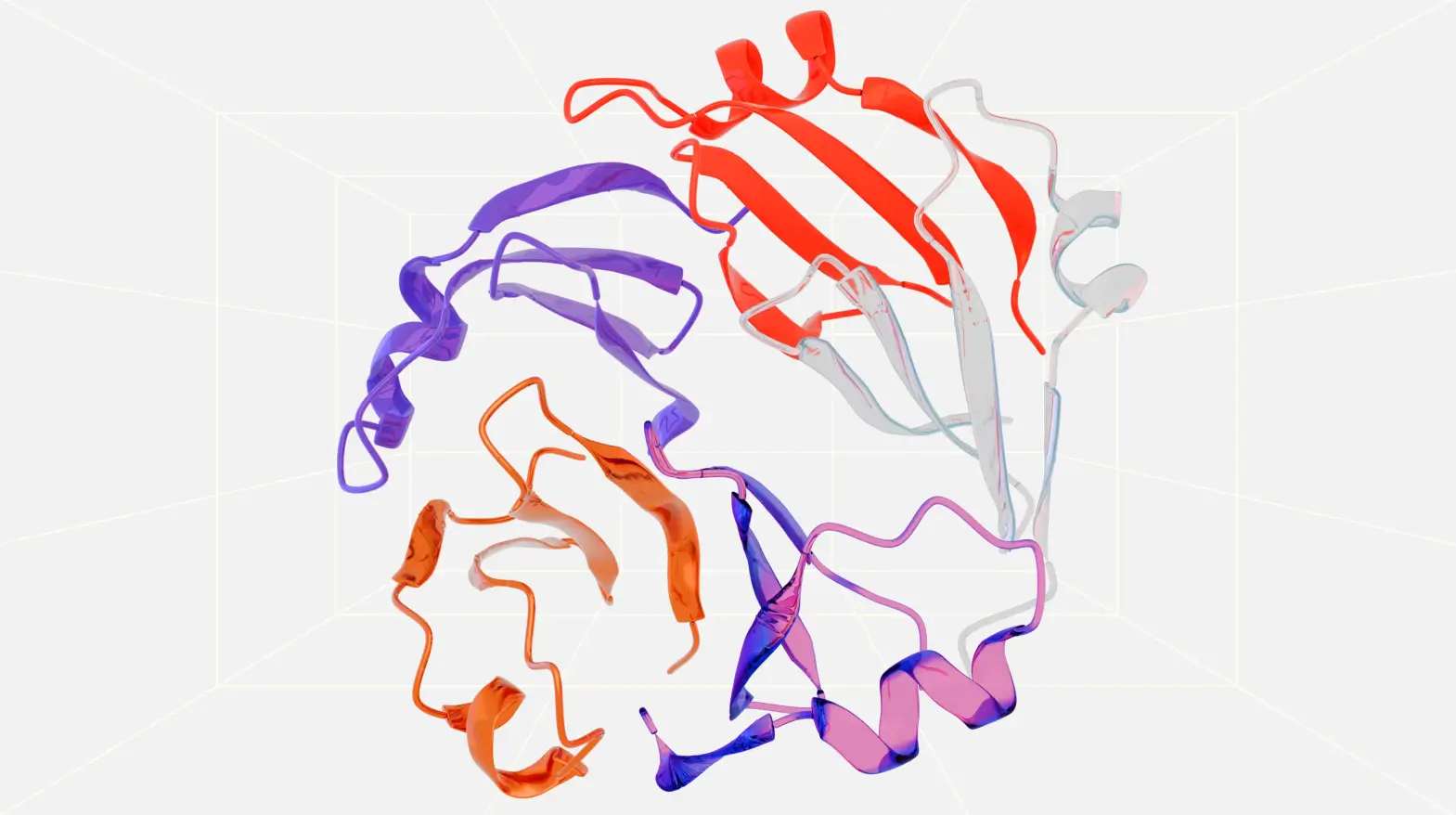

Turning visual data into patches

We take inspiration from large language models, which acquire generalist capabilities by training on internet-scale data. 13, 14 The success of the LLM paradigm is enabled in part by the use of tokens that elegantly unify diverse modalities of text: code, math and various natural languages. In this work, we consider how generative models of visual data can inherit such benefits. Whereas LLMs have text tokens, Sora has visual patches. Patches have previously been shown to be an effective representation for models of visual data. 15, 16, 17, 18 We find that patches are a highly scalable and effective representation for training generative models on diverse types of videos and images.

At a high level, we turn videos into patches by first compressing videos into a lower-dimensional latent space, 19 and subsequently decomposing the representation into spacetime patches.
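The decomposition step can be illustrated with a minimal NumPy sketch. The report does not give Sora's latent shape or patch size, so the layout `(T, H, W, C)`, the function name `patchify`, and the patch sizes `pt`, `ph`, `pw` below are all hypothetical choices made for illustration.

```python
import numpy as np

def patchify(latent: np.ndarray, pt: int, ph: int, pw: int) -> np.ndarray:
    """Split a latent video (T, H, W, C) into flattened spacetime patches.

    Returns an array of shape (num_patches, pt * ph * pw * C).
    Patch sizes are illustrative assumptions, not values from the report.
    """
    T, H, W, C = latent.shape
    if T % pt or H % ph or W % pw:
        raise ValueError("latent dimensions must be divisible by the patch size")
    # Split each axis into (number of patches, extent within a patch).
    x = latent.reshape(T // pt, pt, H // ph, ph, W // pw, pw, C)
    # Move the three patch-index axes to the front.
    x = x.transpose(0, 2, 4, 1, 3, 5, 6)
    # One row per patch, with the patch contents flattened.
    return x.reshape(-1, pt * ph * pw * C)

# Hypothetical latent: 8 latent frames, a 16x24 spatial grid, 4 channels.
latent = np.arange(8 * 16 * 24 * 4, dtype=np.float32).reshape(8, 16, 24, 4)
patches = patchify(latent, pt=2, ph=4, pw=4)
print(patches.shape)  # (96, 128)
```

Under this layout an image can be treated as a video with a single frame (`T = 1`, `pt = 1`), so the same function yields a sequence of patches for both images and videos.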

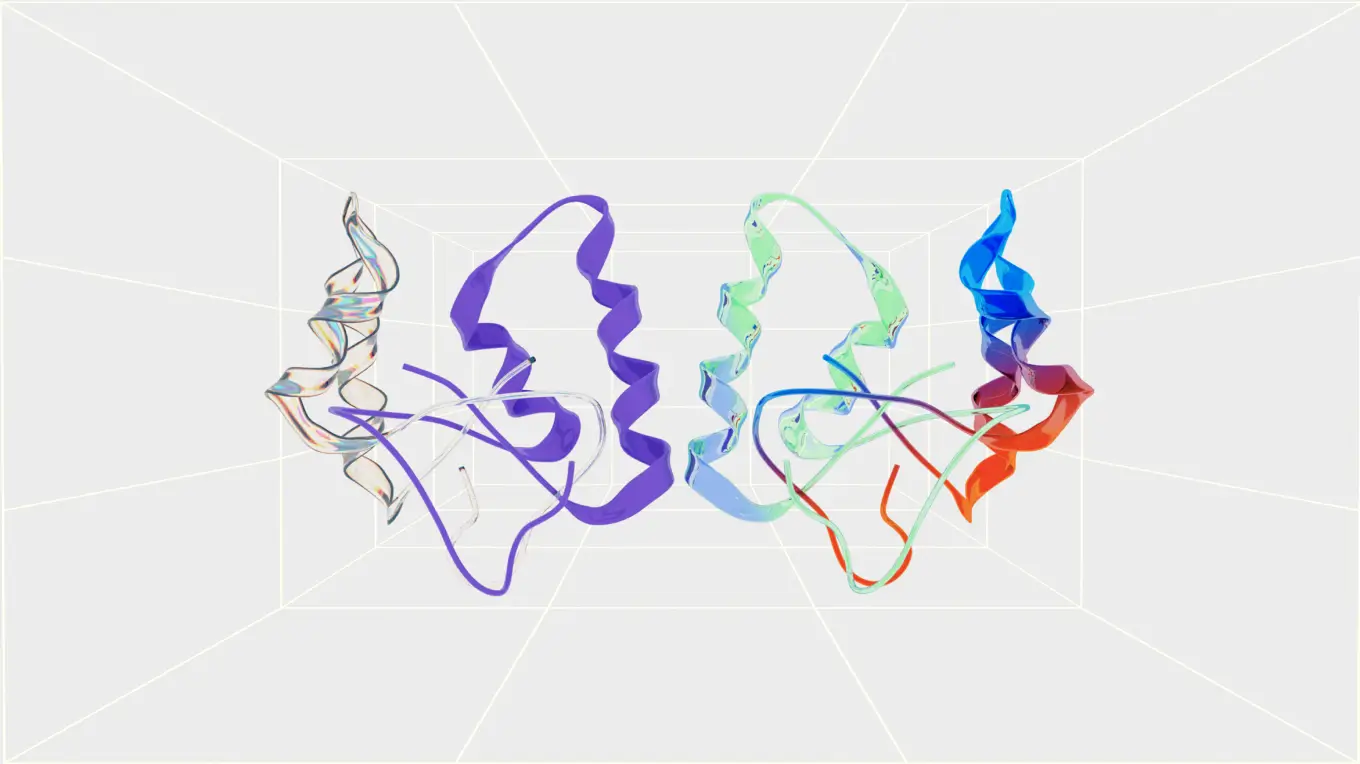

Video compression network

We train a network that reduces the dimensionality of visual data. 20 This network takes raw video as input and outputs a latent representation that is compressed both temporally and spatially. Sora is trained on and subsequently generates videos within this compressed latent space. We also train a corresponding decoder model that maps generated latents back to pixel space.
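To make the shape changes concrete, here is a toy stand-in for the encoder and decoder. It uses average pooling and value repetition rather than a learned network, and the compression factors `ft` (temporal) and `fs` (spatial) are hypothetical; the report does not describe the actual architecture or factors.

```python
import numpy as np

def toy_encode(video: np.ndarray, ft: int, fs: int) -> np.ndarray:
    """Average-pool a (T, H, W, C) video by ft in time and fs in space.

    A stand-in for a learned encoder, used only to show the shapes.
    """
    T, H, W, C = video.shape
    x = video.reshape(T // ft, ft, H // fs, fs, W // fs, fs, C)
    return x.mean(axis=(1, 3, 5))

def toy_decode(latent: np.ndarray, ft: int, fs: int) -> np.ndarray:
    """Map a latent back to the pixel-space shape by repeating each value."""
    x = np.repeat(latent, ft, axis=0)
    x = np.repeat(x, fs, axis=1)
    return np.repeat(x, fs, axis=2)

# Hypothetical clip: 16 frames of 64x96 RGB.
video = np.random.default_rng(0).random((16, 64, 96, 3))
latent = toy_encode(video, ft=4, fs=8)
recon = toy_decode(latent, ft=4, fs=8)
print(latent.shape, recon.shape)  # (4, 8, 12, 3) (16, 64, 96, 3)
```

A learned encoder would typically also change the channel dimension and preserve far more detail than pooling does; the sketch only shows that generation happens on a much smaller grid than the pixel grid.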

Scaling transformers for video generation

Sora is a diffusion model; 21 given input noisy patches (and conditioning information like text prompts), it is trained to predict the original “clean” patches. Importantly, Sora is a diffusion transformer. 26 Transformers have demonstrated remarkable scaling properties across a variety of domains, including language modeling, 13, 14 computer vision, 15 and image generation. 27
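The training objective described above can be sketched as follows. This is a generic denoising setup, not Sora's actual recipe: the variance-preserving noise mix, the mean-squared-error loss, and the `predict_clean(noisy, noise_level)` interface are assumptions, and text conditioning is omitted for brevity.

```python
import numpy as np

def add_noise(clean: np.ndarray, noise_level: float, rng) -> np.ndarray:
    """Blend clean patches with Gaussian noise; noise_level is in [0, 1].

    The variance-preserving mix is an assumed choice for illustration.
    """
    noise = rng.standard_normal(clean.shape)
    return np.sqrt(1.0 - noise_level) * clean + np.sqrt(noise_level) * noise

def denoising_loss(predict_clean, clean: np.ndarray, noise_level: float, rng) -> float:
    """Mean squared error between the model's prediction and the clean patches."""
    noisy = add_noise(clean, noise_level, rng)
    pred = predict_clean(noisy, noise_level)
    return float(np.mean((pred - clean) ** 2))

rng = np.random.default_rng(0)
clean = rng.standard_normal((96, 128))  # hypothetical (num_patches, patch_dim)

# Placeholder "model" that returns its input unchanged.
identity_model = lambda noisy, noise_level: noisy

loss_no_noise = denoising_loss(identity_model, clean, 0.0, rng)
loss_half_noise = denoising_loss(identity_model, clean, 0.5, rng)
print(loss_no_noise, loss_half_noise > 0.0)
```

In a real system `predict_clean` would be the diffusion transformer, and training would minimize this loss over many noise levels and training examples.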

Variable durations, resolutions, aspect ratios

Past approaches to image and video generation typically resize, crop or trim videos to a standard size, e.g., 4-second videos at 256x256 resolution. We find that training on data at its native size instead provides several benefits.

Sampling flexibility

Sora can sample widescreen 1920x1080 videos, vertical 1080x1920 videos and everything in between. This lets Sora create content for different devices directly at their native aspect ratios. It also lets us quickly prototype content at smaller sizes before generating at full resolution, all with the same model.
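One way to see how a single patch-based model can serve all of these sizes is that the output size only changes the shape of the patch grid, and therefore the sequence length. The sketch below assumes hypothetical strides (pixels or frames covered by one patch along each axis, after compression and patching); the report does not state the real values.

```python
def patch_grid(frames: int, height: int, width: int,
               t_stride: int = 8, s_stride: int = 20) -> tuple:
    """Return the (time, rows, cols) patch-grid shape for a video.

    t_stride and s_stride are hypothetical: the number of frames and
    pixels covered by one patch along the time and spatial axes.
    """
    if frames % t_stride or height % s_stride or width % s_stride:
        raise ValueError("video size must be divisible by the strides")
    return (frames // t_stride, height // s_stride, width // s_stride)

def num_tokens(frames: int, height: int, width: int) -> int:
    """Total number of spacetime patches for a video of the given size."""
    t, rows, cols = patch_grid(frames, height, width)
    return t * rows * cols

print(patch_grid(64, 1080, 1920))  # widescreen: (8, 54, 96)
print(patch_grid(64, 1920, 1080))  # vertical:   (8, 96, 54)
print(num_tokens(64, 1080, 1920))  # 41472
print(num_tokens(64, 540, 960))    # 10368
```

Under these assumptions, halving both spatial dimensions cuts the number of patches by a factor of four, which is consistent with prototyping at a smaller size being cheaper than generating at full resolution.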

Improved framing and composition

We empirically find that training on videos at their native aspect ratios improves composition and framing. We compare Sora against a version of our model that crops all training videos to be square, which is common practice when training generative models. The model trained on square crops (left) sometimes generates videos where the subject is only partially in view. In comparison, videos from Sora (right) have improved framing.
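A short calculation shows how much of a frame a square crop discards. The sketch assumes a center crop, which is one common choice; the report only says that training videos were cropped to be square.

```python
def center_square_crop(width: int, height: int) -> tuple:
    """Return the (left, top, right, bottom) box of a centered square crop."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return (left, top, left + side, top + side)

def retained_fraction(width: int, height: int) -> float:
    """Fraction of the original frame area kept by a square crop."""
    side = min(width, height)
    return side * side / (width * height)

print(center_square_crop(1920, 1080))  # (420, 0, 1500, 1080)
print(retained_fraction(1920, 1080))   # 0.5625
```

For a 1920x1080 frame, a centered square crop keeps about 56% of the pixels and drops 420 columns on each side, so a subject near the left or right edge can fall partly outside the training crop.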

Language understanding

Training text-to-video generation systems requires a large number of videos with corresponding text captions. We apply the re-captioning technique introduced in DALL·E 3 30 to videos. We first train a highly descriptive captioner model and then use it to produce text captions for all videos in our training set. We find that training on highly descriptive video captions improves text fidelity as well as the overall quality of videos.

Similar to DALL·E 3, we also leverage GPT to turn short user prompts into longer, detailed captions that are sent to the video model. This enables Sora to generate high-quality videos that accurately follow user prompts.

Prompting with images and videos

All of the results above and on our landing page show text-to-video samples. But Sora can also be prompted with other inputs, such as pre-existing images or video. This capability enables Sora to perform a wide range of image and video editing tasks, such as creating perfectly looping videos, animating static images, and extending videos forwards or backwards in time.

Animating DALL·E images

Sora is capable of generating videos provided an image and prompt as input. Below we show example videos generated based on DALL·E 2 31 and DALL·E 3 30 images.

Extending generated videos

Sora is also capable of extending videos, either forward or backward in time. Below are three videos that were all extended backward in time starting from a segment of a generated video. As a result, each of the three videos starts differently from the others, yet all three videos lead to the same ending.

We believe the capabilities Sora has today demonstrate that continued scaling of video models is a promising path towards the development of capable simulators of the physical and digital world, and the objects, animals and people that live within them.

Citation

Please cite as Brooks, Peebles, et al., and use the following BibTeX for citation: https://openai.com/bibtex/videoworldsimulators2024.bib